News

- November, 2019: We have received the Replicability Stamp for our code! (see Downloads).

- August, 2019: Presentation available (see Downloads).

- July, 2019: Poster available (see Downloads).

- May, 2019: Paper available (see Downloads).

- May, 2019: Website launched.

Abstract

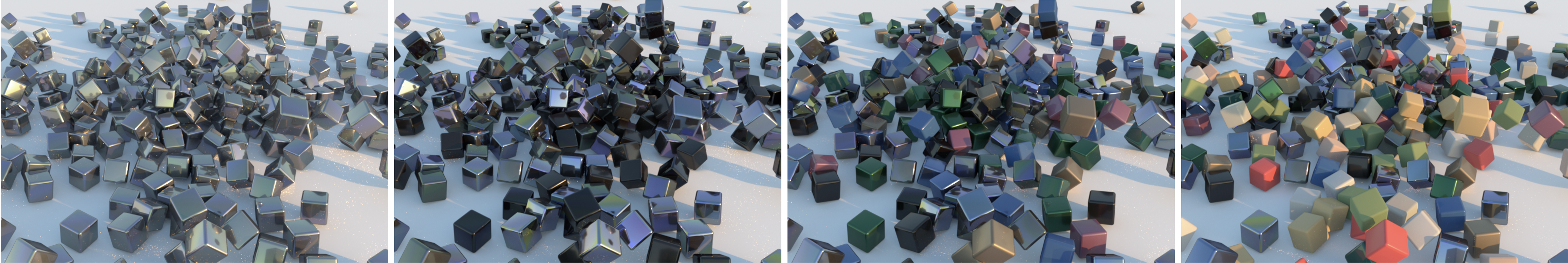

We present a model to measure the similarity in appearance between different materials, which correlates with human similarity judgments. We first create a database of 9,000 rendered images depicting objects with varying materials, shape and illumination. We then gather data on perceived similarity from crowdsourced experiments; our analysis of over 114,840 answers suggests that indeed a shared perception of appearance similarity exists. We feed this data to a deep learning architecture with a novel loss function, which learns a feature space for materials that correlates with such perceived appearance similarity. Our evaluation shows that our model outperforms existing metrics. Last, we demonstrate several applications enabled by our metric, including appearance-based search for material suggestions, database visualization, clustering and summarization, and gamut mapping.
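As a rough illustration of the appearance-based search application mentioned above, the following minimal sketch ranks materials by their distance to a query material in a feature space. It is not the released code: the material names, the 3-D feature vectors, the helper `suggest_materials`, and the use of Euclidean distance as the dissimilarity measure are all assumptions made for this example. In practice the vectors would come from the trained model.

```python
import math

def euclidean(a, b):
    """Euclidean distance between two equal-length feature vectors."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))

def suggest_materials(query, features, k=3):
    """Return the k material names closest to `query` in feature space.

    `features` maps a material name to its feature vector. Using Euclidean
    distance as the dissimilarity measure is an assumption of this sketch.
    """
    others = [(name, vec) for name, vec in features.items() if name != query]
    ranked = sorted(others, key=lambda item: euclidean(features[query], item[1]))
    return [name for name, _ in ranked[:k]]

# Hypothetical feature vectors with made-up values, for illustration only.
features = {
    "gold":   [0.9, 0.1, 0.2],
    "brass":  [0.8, 0.2, 0.2],
    "chrome": [0.7, 0.1, 0.6],
    "felt":   [0.1, 0.9, 0.1],
    "rubber": [0.2, 0.8, 0.3],
}

print(suggest_materials("gold", features, k=2))
```

With these made-up vectors, the two suggestions for "gold" are the other metallic entries, which is the kind of behavior one would hope for if distances in the learned space track perceived similarity.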

Downloads

- Paper [PDF, 81.3 MB]

- Paper low-res [PDF, 2.3 MB]

- Presentation [PPTX, 120.3 MB]

- Poster [PDF, 73.5 MB]

- Supplementary [ZIP, 65.9 MB]

- Dataset

- Code and data

Bibtex

Related

- 2017: Attribute-Preserving Gamut Mapping of Measured BRDFs

- 2017: Learning Icons Appearance Similarity

- 2017: Intuitive Editing of Visual Appearance From Real-World Datasets

- 2016: An Intuitive Control Space for Material Appearance

Acknowledgements

We want to thank the anonymous reviewers for their encouraging and insightful feedback on the manuscript; Ibon Guillen for his invaluable help using Mitsuba; Miguel Galindo, Ibon Guillen, Adrian Jarabo, and Julio Marco for their help setting up the scenes; and the members of the Graphics and Imaging Lab for the discussions about the paper. Sandra Malpica was supported by a DGA predoctoral grant (period 2018-2022). Ana Serrano was supported by an FPI grant from the Spanish Ministry of Economy and Competitiveness and an NVIDIA Graduate Fellowship. Elena Garces is additionally supported by a Juan de la Cierva Fellowship. This project has received funding from the European Research Council (ERC) under the European Union's Horizon 2020 research and innovation programme (CHAMELEON project, grant agreement No 682080) and the Spanish Ministry of Economy and Competitiveness (projects TIN2016-78753-P and TIN2016-79710-P).